Core ML & On-Device ML Consulting

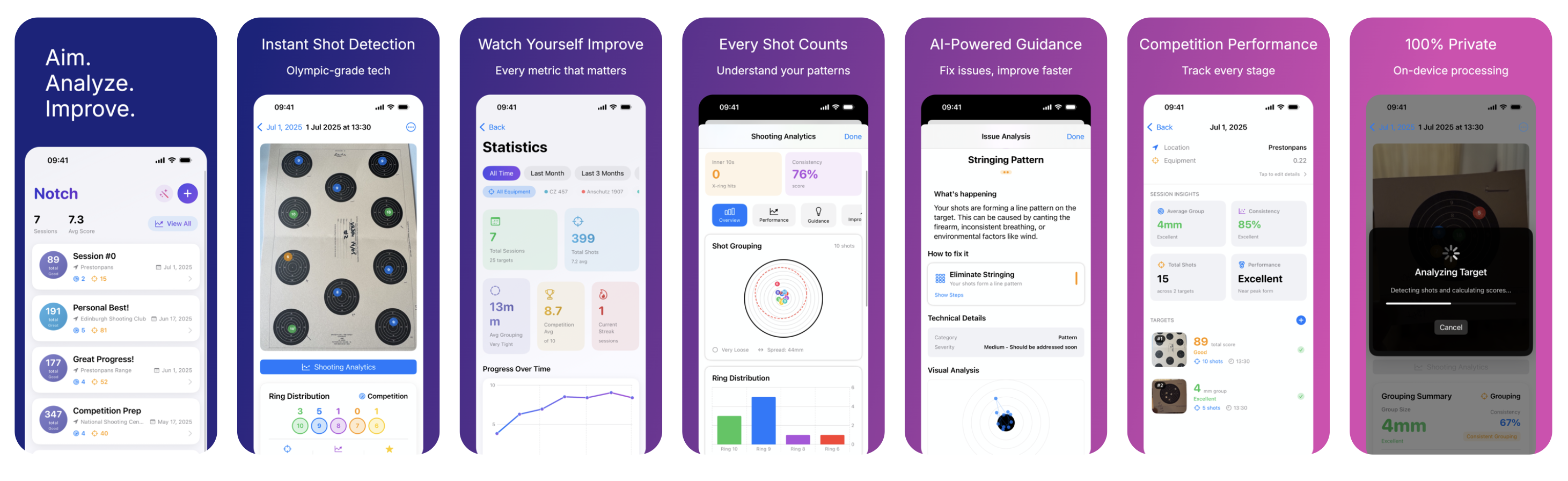

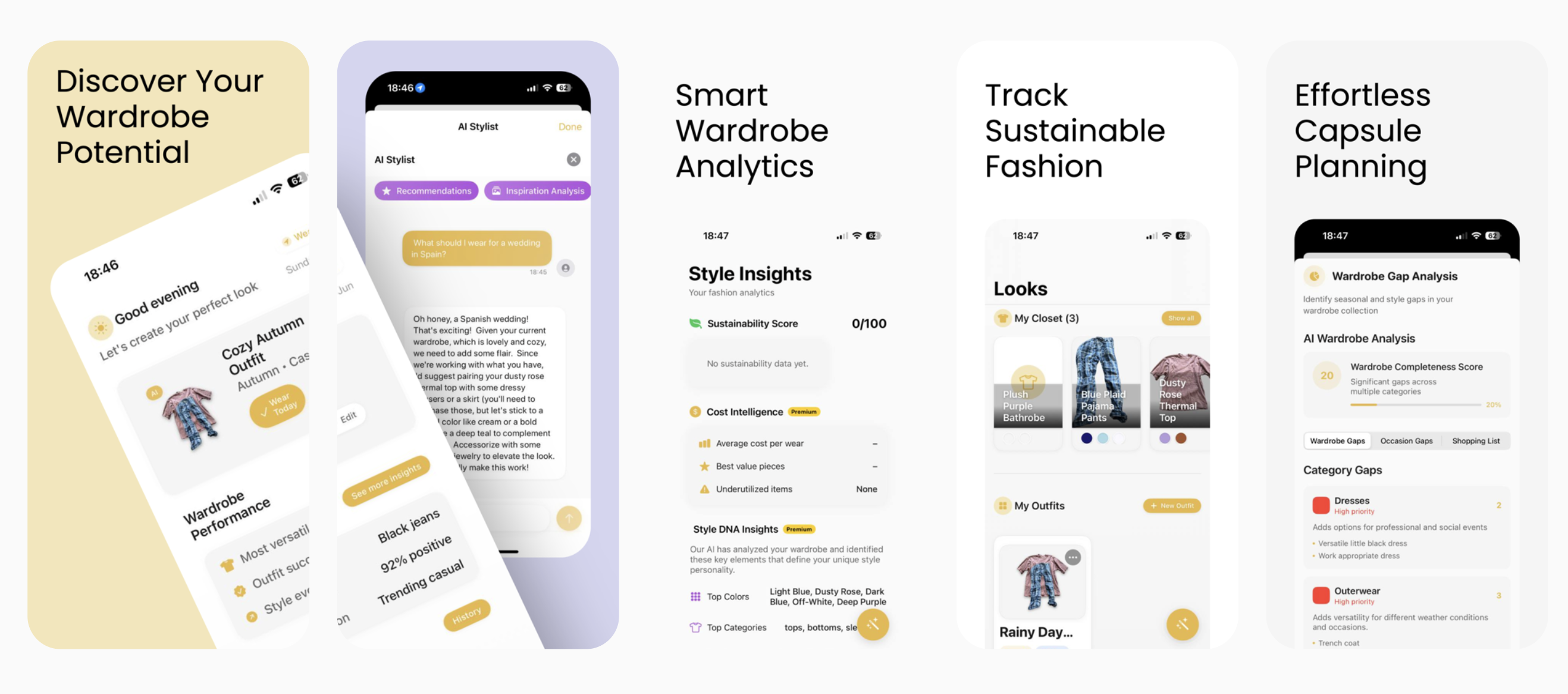

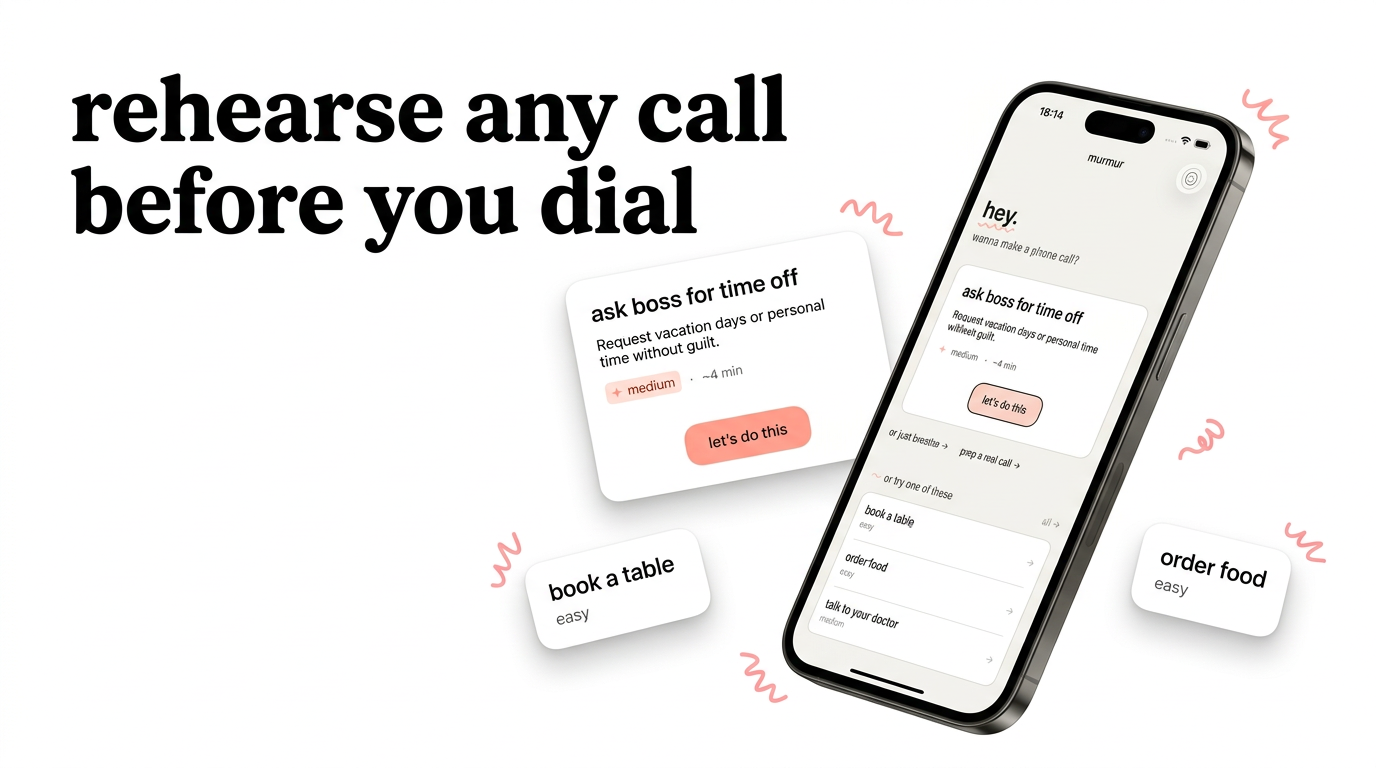

Vision, Core ML, Natural Language, on-device voice. Models that run locally without the latency, privacy cost, or cloud bill of a server round-trip. I've built three of my own apps whose entire value is on-device inference.

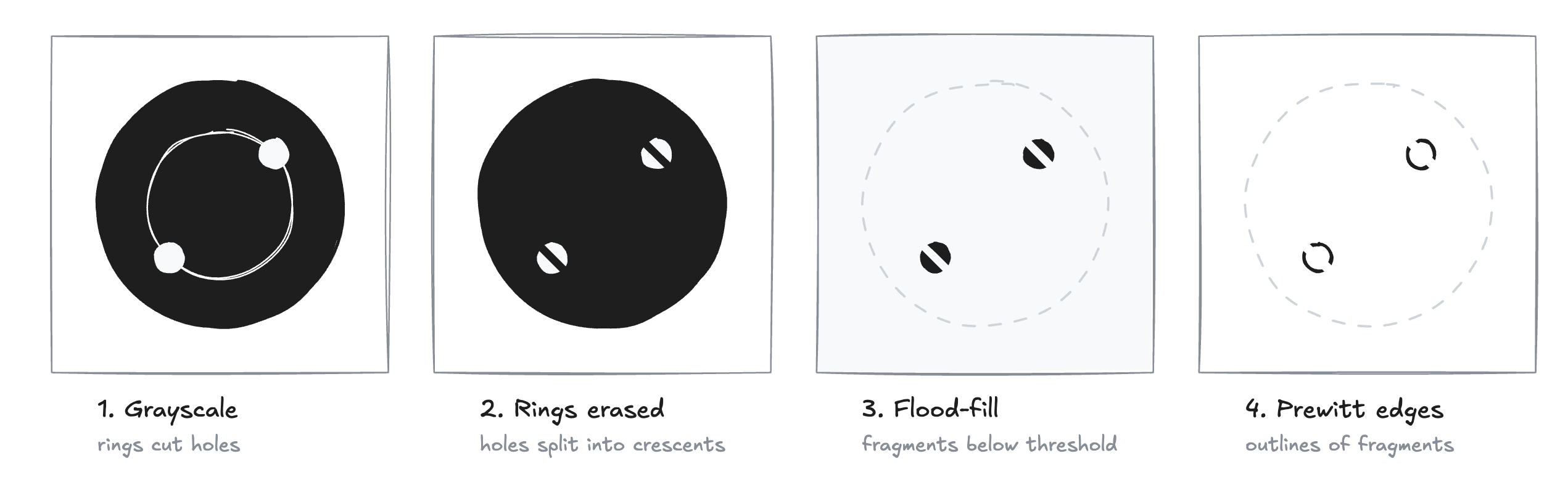

- on-device Vision and Core ML pipelines with sub-100ms latency budgets

- PyTorch/TensorFlow → Core ML conversion, quantization, model size reduction

- on-device voice AI with ElevenLabs fallback for real-time conversations

What clients say

"Vadim was instrumental to the success Epsy enjoyed on iOS, taking it from an idea on a Miro board to the highest rated and most downloaded app of its kind on the store."

James C. · Mobile Engineering Lead, Epsy

"We had a strict deadline, and Vadim managed to complete the job in time. He gave us meaningful feedback and suggested better approaches, not trying to blindly stick to our specification."

Founder · Pre-seed streaming service

"I can say with confidence that it will be difficult to find a better developer. Vadim is achievement-oriented, highly organized, with very good communication skills."

Alex Z. · Co-Founder, eda.so

Common engagements

Integrate an existing model

2-4 weeks end-to-end. I write the glue code, run device-class testing from iPhone 12 through the current generation, design the update strategy (bundled vs fetched), and plan the fallback for when inference fails. The fallback matters more than the happy path.

Ship a PyTorch/TF model to Core ML

I convert the model via coremltools, debug the inevitable 'it converts but behaves differently' step, and add version-gate hygiene so the next iOS doesn't silently break inference.
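The "converts but behaves differently" step usually means running the source model and the converted model on the same input and measuring how far the outputs drift. A minimal sketch of that parity check, assuming both outputs have already been collected as flat lists of floats. The name `parity_report` and the default tolerances are illustrative, not part of coremltools:

```python
import math


def parity_report(reference, converted, atol=1e-3, rtol=1e-2):
    """Compare source-model outputs with converted-model outputs.

    Both arguments are flat sequences of floats produced from the same input.
    The tolerances are hypothetical defaults; choose them per model.
    """
    if len(reference) != len(converted):
        raise ValueError("output lengths differ")

    worst_index, worst_abs = -1, 0.0
    failures = 0
    for i, (ref, conv) in enumerate(zip(reference, converted)):
        abs_err = abs(ref - conv)
        if abs_err > worst_abs:
            worst_index, worst_abs = i, abs_err
        # math.isclose passes a pair that is within the relative
        # tolerance or the absolute tolerance, whichever is looser.
        if not math.isclose(ref, conv, rel_tol=rtol, abs_tol=atol):
            failures += 1

    return {
        "max_abs_error": worst_abs,
        "worst_index": worst_index,
        "failures": failures,
        "ok": failures == 0,
    }
```

Running a check like this over a fixed set of inputs on each device class and OS version is one way to notice drift before users do.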

Architect a new ML-backed feature

Product brief in, architecture out. I tell you whether on-device is the right call and what the fallback looks like.

Pricing

Architecture reviews, hiring help, second opinions on that thing that's been bugging you.

Available now · Features, MVPs, migrations, firefighting. Minimum 5 days.

Available now · Priority support: review agency code, join architecture calls, catch problems before they ship.

Questions

How do I decide between on-device and server-side?

On-device wins when any of these apply: the feature must work offline, latency needs to be under ~100ms, per-inference cost matters, or you can't send the data off-device. If none apply, server-side is usually cheaper and more maintainable.
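The rule above is small enough to write down. A minimal sketch, where `FeatureRequirements` and `recommend_placement` are hypothetical names and the ~100ms figure is used as an adjustable default threshold:

```python
from dataclasses import dataclass


@dataclass
class FeatureRequirements:
    must_work_offline: bool
    latency_budget_ms: float
    per_inference_cost_matters: bool
    data_must_stay_on_device: bool


def recommend_placement(req, latency_threshold_ms=100.0):
    """Return ("on-device" | "server-side", reasons) for a feature."""
    reasons = []
    if req.must_work_offline:
        reasons.append("offline")
    if req.latency_budget_ms < latency_threshold_ms:
        reasons.append("latency")
    if req.per_inference_cost_matters:
        reasons.append("cost")
    if req.data_must_stay_on_device:
        reasons.append("privacy")
    # Any single reason is enough; with none, server-side is the default.
    return ("on-device" if reasons else "server-side", reasons)
```

Returning the reasons alongside the verdict keeps the decision reviewable when the requirements change.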

Will the model fit in our app?

Almost always yes. The sharper question is whether it still fits after the next three features on the roadmap. I review your binary budget and model options before you commit.

We need to update our model after launch. How does that work?

Two clean options: bake the model into the binary (every update is an App Store cycle) or fetch at runtime with integrity checks, caching, and a fallback path. Teams that commit to neither and mix the two end up with bugs that neither approach would produce on its own.
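For the fetch-at-runtime option, the integrity check, cache, and fallback fit together roughly as follows. This is a language-agnostic sketch written in Python rather than app code. It assumes the expected SHA-256 digest arrives through a channel you already trust, and `resolve_model` and the `download` callable are hypothetical names:

```python
import hashlib
from pathlib import Path


def sha256_of(path):
    """Hash a file in chunks so large models are not read into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def resolve_model(cache_path, expected_sha256, download=None):
    """Return the path of a verified model, or None to take the fallback path.

    `download` is a hypothetical callable that returns the model bytes.
    """
    cache_path = Path(cache_path)
    # 1. Reuse the cached copy only if it still matches the expected digest.
    if cache_path.exists() and sha256_of(cache_path) == expected_sha256:
        return cache_path

    # 2. Otherwise download to a temporary file and verify before using it.
    if download is not None:
        tmp_path = cache_path.with_name(cache_path.name + ".part")
        try:
            tmp_path.write_bytes(download())
            if sha256_of(tmp_path) == expected_sha256:
                tmp_path.replace(cache_path)  # rename into the cache
                return cache_path
        except OSError:
            pass  # network or disk failure: fall through to the fallback
        finally:
            tmp_path.unlink(missing_ok=True)

    # 3. Nothing verified: the caller runs the feature's fallback path.
    return None
```

Returning `None` instead of raising forces the caller to handle the fallback explicitly, and an unverified file never reaches the cache path.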

We have a model that works in Python. Can you ship it in our iOS app?

Yes. The conversion from PyTorch or TensorFlow to Core ML is the part most teams underestimate: outputs drift from the source model, some layers don't convert cleanly, and model size and inference speed surface as ship blockers. I handle the conversion, device-class testing across iPhone generations, and the fallback path for when on-device inference fails. 2-4 weeks end-to-end.

How quickly can you start?

Advisory calls can happen within days. For project work, I typically need 1-2 weeks' notice to clear the calendar, though I keep some buffer for urgent firefighting. Check the availability badges above for current openings.

Do you work with early-stage startups?

Yes, from pre-seed to Series C and beyond. For very early teams, the advisory tier often makes more sense than project work: you get architecture guidance without committing to a large engagement before you've validated the product.

What's included in the day rate?

Everything: code, architecture decisions, code review, documentation, async Slack availability during working hours. No surprise add-ons. I bill for time spent working on your project, not for "thinking about it in the shower."

We're in a different timezone. Will that slow things down?

I'm currently in Vancouver (Pacific Time), with full overlap for North American teams. For UK and Europe, I'm online by their afternoon. For Gulf or APAC, we'd agree on overlap hours and handle the rest async. I've worked with teams from San Francisco to Dubai.

Shipping an on-device ML feature?

Tell me what you're working on. I reply within 48 hours.

work@drobinin.com